Visible and Infrared Image Fusion Based on Group K-SVD


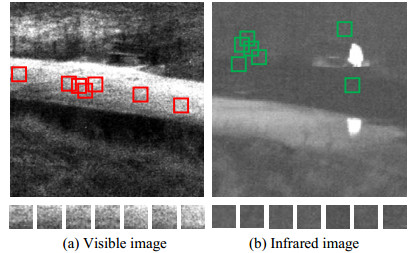

Abstract: Traditional fusion methods based on sparse representation train the dictionary and perform sparse decomposition on individual image blocks. Because they ignore the intrinsic relationships between image blocks, the dictionary atoms represent image features poorly and the sparse coefficients are inaccurate, which degrades the fused image. To address this problem, this paper proposes a fusion method for visible and infrared images based on group K-SVD (K-means singular value decomposition). Exploiting the non-local similarity of images, the method gathers similar image blocks into a structure group matrix and performs dictionary training and sparse decomposition on these matrices with group K-SVD. This improves both the representation ability of the dictionary atoms and the accuracy of the sparse coefficients. Experimental results show that the method outperforms traditional sparse fusion methods in both subjective and objective evaluation.
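The structure group matrix described in the abstract can be illustrated with a small block-matching sketch. This is not the authors' implementation: the function names, the Euclidean similarity measure, the block size, the sampling step, and the number of similar blocks are all assumptions chosen for illustration, and the search covers the whole image rather than a local window.

```python
import numpy as np


def extract_patches(img, size, step):
    """Return size x size patches, flattened as columns, plus their top-left coordinates."""
    h, w = img.shape
    coords = [(r, c)
              for r in range(0, h - size + 1, step)
              for c in range(0, w - size + 1, step)]
    patches = np.stack(
        [img[r:r + size, c:c + size].ravel() for r, c in coords], axis=1
    )
    return patches, coords


def structure_group(patches, ref_index, n_similar):
    """Stack the n_similar patches closest to the reference patch as matrix columns.

    Similarity is the squared Euclidean distance (an assumption; the abstract
    does not state which measure the paper uses).
    """
    ref = patches[:, ref_index:ref_index + 1]
    dist = np.sum((patches - ref) ** 2, axis=0)
    nearest = np.argsort(dist, kind="stable")[:n_similar]
    return patches[:, nearest], nearest


# Hypothetical usage on a random 16 x 16 "image".
rng = np.random.default_rng(0)
image = rng.random((16, 16))
patches, coords = extract_patches(image, size=4, step=2)
group, indices = structure_group(patches, ref_index=0, n_similar=5)
```

Each column of `group` is one vectorized block, so a dictionary trained on such matrices sees similar blocks together instead of one block at a time.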


Key words:

- image fusion

- non-local similarity

- structure group matrix

- group K-SVD


Table 1. Objective evaluation indices for the Nato_camp images

| Method | Q0 | Qw | Qe | Qab/f |
| --- | --- | --- | --- | --- |
| SR | 0.5857 | 0.7322 | 0.7151 | 0.3216 |
| ASR | 0.5966 | 0.7617 | 0.7439 | 0.4673 |
| JSR | 0.5649 | 0.7311 | 0.7140 | 0.3760 |
| Proposed | 0.5926 | 0.7737 | 0.7556 | 0.4871 |

Table 2. Objective evaluation indices for the Kaptein images

| Method | Q0 | Qw | Qe | Qab/f |
| --- | --- | --- | --- | --- |
| SR | 0.5759 | 0.7365 | 0.7193 | 0.2489 |
| ASR | 0.5760 | 0.7659 | 0.7480 | 0.4206 |
| JSR | 0.5445 | 0.7231 | 0.7062 | 0.3400 |
| Proposed | 0.5794 | 0.7941 | 0.7755 | 0.4807 |

Table 3. Objective evaluation indices for the Duine images

| Method | Q0 | Qw | Qe | Qab/f |
| --- | --- | --- | --- | --- |
| SR | 0.6426 | 0.8760 | 0.8555 | 0.2463 |
| ASR | 0.6781 | 0.9247 | 0.9031 | 0.5604 |
| JSR | 0.3215 | 0.7440 | 0.7266 | 0.2091 |
| Proposed | 0.6750 | 0.9312 | 0.9094 | 0.4783 |

Table 4. Objective evaluation indices for the Road images

| Method | Q0 | Qw | Qe | Qab/f |
| --- | --- | --- | --- | --- |
| SR | 0.6909 | 0.7994 | 0.7807 | 0.5101 |
| ASR | 0.6941 | 0.8053 | 0.7865 | 0.6073 |
| JSR | 0.6346 | 0.7763 | 0.7581 | 0.5210 |
| Proposed | 0.6924 | 0.8099 | 0.7909 | 0.5815 |

Table 5. Average evaluation indices for the different fusion methods

| Method | Q0 | Qw | Qe | Qab/f |
| --- | --- | --- | --- | --- |
| SR | 0.6238 | 0.7860 | 0.7677 | 0.3276 |
| ASR | 0.6358 | 0.8156 | 0.7965 | 0.5066 |
| JSR | 0.5264 | 0.7436 | 0.7262 | 0.3544 |
| Proposed | 0.6349 | 0.8272 | 0.8079 | 0.5053 |
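The Q0 column is not defined in this section. Assuming it denotes the universal image quality index of Wang and Bovik, the sketch below computes that index globally over two images, Q = 4·σxy·x̄·ȳ / ((σx² + σy²)(x̄² + ȳ²)). The original index is evaluated over sliding windows and averaged; the global form, the function names, and the averaging of the two source-to-fused scores are simplifying assumptions, so the output is not expected to reproduce the tabulated values.

```python
import numpy as np


def universal_quality_index(x, y):
    """Global universal image quality index between two equally sized images.

    Simplification: computed once over the whole image instead of over
    sliding windows. Returns NaN when the denominator is zero.
    """
    x = np.asarray(x, dtype=np.float64).ravel()
    y = np.asarray(y, dtype=np.float64).ravel()
    mean_x, mean_y = x.mean(), y.mean()
    var_x, var_y = x.var(ddof=1), y.var(ddof=1)
    cov_xy = np.sum((x - mean_x) * (y - mean_y)) / (x.size - 1)
    denom = (var_x + var_y) * (mean_x ** 2 + mean_y ** 2)
    if denom == 0:
        return float("nan")
    return 4.0 * cov_xy * mean_x * mean_y / denom


def fusion_q0(source_a, source_b, fused):
    """Average of the index between each source image and the fused image.

    The equal-weight average is an assumption made for illustration.
    """
    return 0.5 * (universal_quality_index(source_a, fused)
                  + universal_quality_index(source_b, fused))


# Hypothetical usage with a simple ramp image.
ramp = np.arange(16, dtype=np.float64).reshape(4, 4)
q_same = universal_quality_index(ramp, ramp)
q_inverted = universal_quality_index(ramp, 15.0 - ramp)
```

The index lies in [-1, 1] and reaches 1 only when the two images are identical, so values closer to 1 indicate that the fused image preserves more of the source image's structure.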

